P-value: This is the p-value that corresponds to the F-statistic. In essence, it tests if the regression model as a whole is useful. The adjusted R-squared can be useful for comparing the fit of different regression models that use different numbers of predictor variables.į-statistic: This indicates whether the regression model provides a better fit to the data than a model that contains no independent variables. The closer it is to 1, the better the predictor variables are able to predict the value of the response variable.Īdjusted R-squared: Ths is a modified version of R-squared that has been adjusted for the number of predictors in the model. It tells us the proportion of the variance in the response variable that can be explained by the predictor variables. Multiple R-Squared: This is known as the coefficient of determination. In this example, mtcars has 32 observations and we used 3 predictors in the regression model, thus the degrees of freedom is 32 – 3 – 1 = 28. The degrees of freedom is calculated as n-k-1 where n = total observations and k = number of predictors.

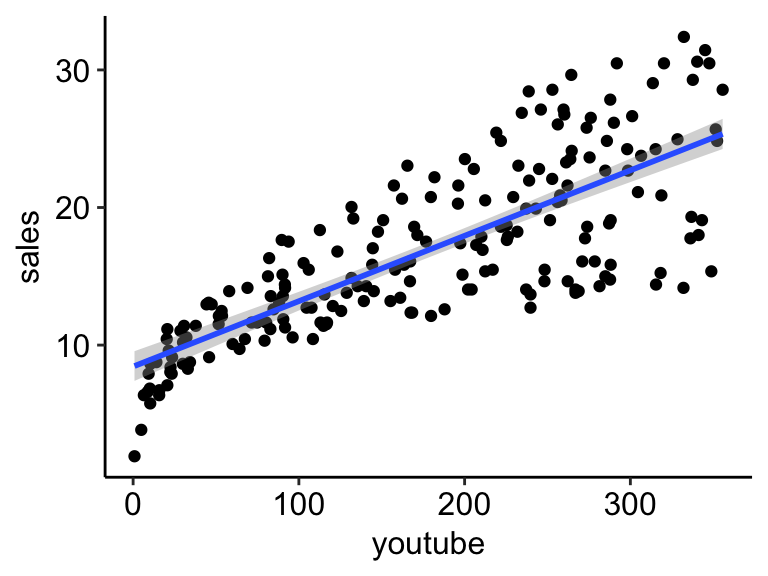

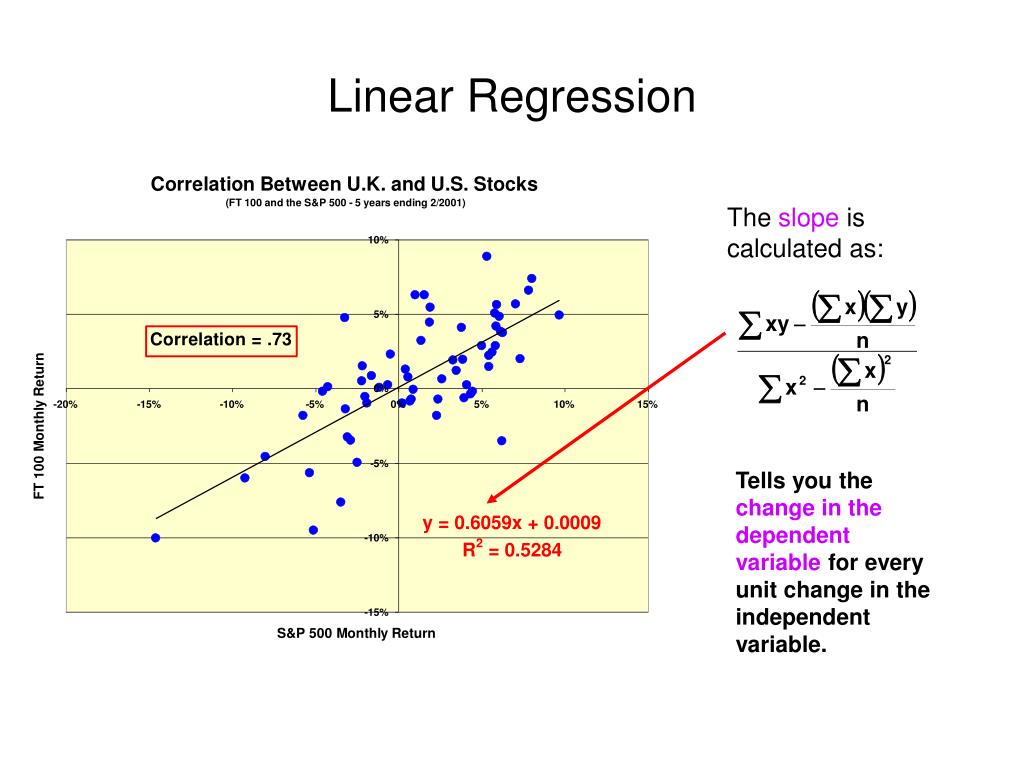

The smaller the value, the better the regression model is able to fit the data. Residual standard error: This tells us the average distance that the observed values fall from the regression line. This last section displays various numbers that help us assess how well the regression model fits our dataset. Assessing Model Fit Residual standard error: 2.561 on 28 degrees of freedom 05 to determine which predictors were significant in this regression model, we’d say that hp and wt are statistically significant predictors while drat is not. 0.05) than the predictor variable is said to be statistically significant. If this value is less than some alpha level (e.g. Pr(>|t|): This is the p-value that corresponds to the t-statistic. T value: This is the t-statistic for the predictor variable, calculated as (Estimate) / (Std. This is a measure of the uncertainty in our estimate of the coefficient. Error: This is the standard error of the coefficient. This tells us the average increase in the response variable associated with a one unit increase in the predictor variable, assuming all other predictor variables are held constant. 03*hp + 1.62*drat – 3.23*wtįor each predictor variable, we’re given the following values:Įstimate: The estimated coefficient. We can use these coefficients to form the following estimated regression equation: This section displays the estimated coefficients of the regression model. The minimum residual was -3.3598, the median residual was -0.5099 and the max residual was 5.7078. Recall that a residual is the difference between the observed value and the predicted value from the regression model. This section displays a summary of the distribution of residuals from the regression model.

Each variable came from the dataset called mtcars. We can see that we used mpg as the response variable and hp, drat, and wt as our predictor variables. This section reminds us of the formula that we used in our regression model.

Lm(formula = mpg ~ hp + drat + wt, data = mtcars) Here is how to interpret every value in the output: Call Call: Residual standard error: 2.561 on 28 degrees of freedom The following code shows how to fit a multiple linear regression model with the built-in mtcars dataset using hp, drat, and wt as predictor variables and mpg as the response variable: #fit regression model using hp, drat, and wt as predictors Example: Interpreting Regression Output in R This tutorial explains how to interpret every value in the regression output in R. To view the output of the regression model, we can then use the summary() command. To fit a linear regression model in R, we can use the lm() command.
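
As a rough check on the definitions in this tutorial, the sketch below refits the mtcars model and recomputes several of the summary() quantities by hand in base R. The variable names (model, s, t_manual, and so on) are illustrative assumptions rather than part of the original tutorial, and the formulas are the standard ones for ordinary least squares with an intercept.

```r
# Refit the model from the tutorial (mpg on hp, drat, and wt)
model <- lm(mpg ~ hp + drat + wt, data = mtcars)
s <- summary(model)

# Degrees of freedom: n - k - 1
n <- nrow(mtcars)               # total observations
k <- length(coef(model)) - 1    # number of predictors, excluding the intercept
df_resid <- n - k - 1

# t value = Estimate / Std. Error, taken from the coefficient table
coefs <- coef(s)
t_manual <- coefs[, "Estimate"] / coefs[, "Std. Error"]

# Two-sided p-value that corresponds to each t-statistic
p_manual <- 2 * pt(abs(t_manual), df = df_resid, lower.tail = FALSE)

# Residual standard error: sqrt(residual sum of squares / degrees of freedom)
rss <- sum(residuals(model)^2)
rse <- sqrt(rss / df_resid)

# Multiple R-squared and adjusted R-squared
tss <- sum((mtcars$mpg - mean(mtcars$mpg))^2)
r2 <- 1 - rss / tss
adj_r2 <- 1 - (1 - r2) * (n - 1) / df_resid

# F-statistic for the model as a whole, and its p-value
f_stat <- (r2 / k) / ((1 - r2) / df_resid)
f_p <- pf(f_stat, k, df_resid, lower.tail = FALSE)

# Print the hand-computed fit statistics next to each other
print(round(c(df = df_resid, rse = rse, r2 = r2,
              adj_r2 = adj_r2, f = f_stat), 4))
print(f_p)
```

Comparing these values against s$sigma, s$r.squared, s$adj.r.squared, and s$fstatistic is one way to confirm that each number in the printed summary means what the tutorial says it means.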
